- Lambda startup time


Downloading the model from S3 on each invocation could take 15–20 seconds.
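One common way to avoid paying that download on every call is to keep the model in module-level state, so it is loaded only when the container is cold and reused while it stays warm. The following is a minimal sketch of that idea, not the author's implementation: `get_model`, `slow_load` and the handler body are hypothetical names, and `slow_load` merely simulates the S3 download with a short sleep (in a real function it would fetch and deserialise the model, for example with boto3).

```python
import time
from typing import Any, Callable, Optional

# Module-level state survives between invocations while the container stays warm.
_MODEL: Optional[Any] = None


def get_model(load_model: Callable[[], Any]) -> Any:
    """Return the cached model, calling load_model only on first use."""
    global _MODEL
    if _MODEL is None:
        _MODEL = load_model()
    return _MODEL


def slow_load() -> dict:
    """Hypothetical stand-in for downloading and deserialising a model from S3."""
    time.sleep(0.1)  # pretend this is the 15-20 second download
    return {"name": "demo-model"}


def handler(event: dict, context: Any) -> dict:
    # Only the first invocation in a fresh container pays for slow_load().
    model = get_model(slow_load)
    return {"model": model["name"], "phrase": event.get("phrase", "")}
```

The first request after a cold start still pays the full load time; the cache only helps the invocations that follow in the same container.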

Run the function’s handler method/function.


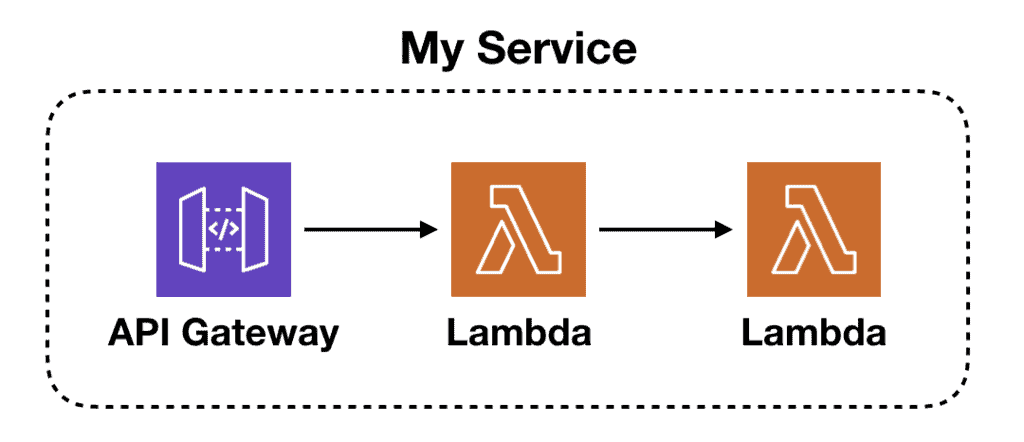

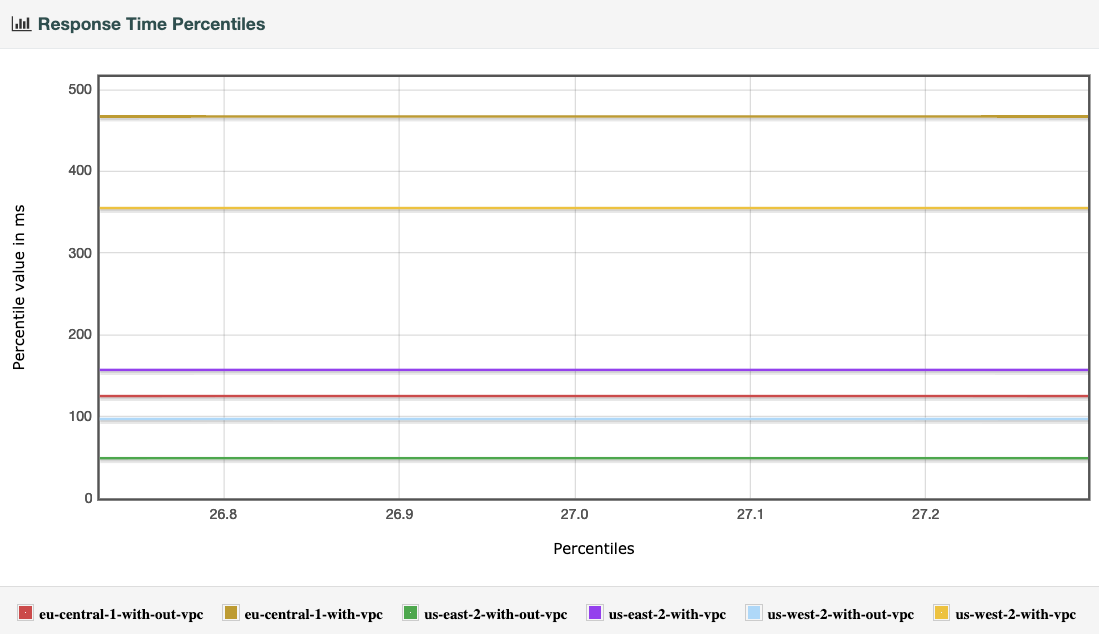

AWS Lambda allows a maximum of 15 minutes of runtime before the function times out and the container dies, confining all un-persisted work (and running computations) to the graveyard of dead containers, never to be restarted. This issue is compounded when your lambda function is in fact an API endpoint which clients can call on an ad hoc basis to trigger some real-time data sciencey/analytics process (in our case NLP on a single phrase of arbitrary length), as that 15-minute Lambda run time suddenly gets slashed to a 30-second window in which to return an HTTP response to the client.

This article isn't trying to put you off running ad hoc NLP pipelines in lambda. On the contrary, it hopes to make you aware of these constraints and some ways to overcome them (or alternatively, help you to live on the edge by choosing to ignore them from a place of understanding).

What is a cold start?

A cold start occurs when the container has been shut down and has to be restarted when the lambda function is invoked. Typically this happens after ~5 mins of inactivity.

A lot has already been written about cold starts, so this article won’t provide a detailed guide (I recommend you check out this article for that).

When a container starts from a cold state, the function needs to:

- Get and load the package containing the lambda code from external persistent storage (e.g.


Why do cold starts hurt data science applications?

Cold starts are slow. That’s the problem. How slow exactly depends on various factors, such as your runtime (python is actually one of the quicker lambda containers to start) and what you’re doing in the setup phase of the Lambda (source).

As data scientists and developers, we’re used to a gentle pace. Start a download of 200 GB of text data (put the kettle on), load a model (pour the tea), run a clustering algorithm (go for a walk)… sound familiar? But whilst this slow pace is fine for experimentation (and productionised systems running on persistent servers), it is potentially fatal for the serverless pattern.
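To see how much of a cold start is spent in your own setup phase, one option is to time the module-level code and flag the first invocation in each container. This is a rough sketch under stated assumptions: the variable names and the whitespace "tokeniser" are hypothetical, and the timer only covers code in this module, not the time Lambda itself spends fetching the package and starting the container.

```python
import time
from typing import Any

_INIT_START = time.perf_counter()

# ... expensive setup (heavy imports, model loading) would go here ...

# Time spent in this module's setup code; measured once per container.
_INIT_SECONDS = time.perf_counter() - _INIT_START
_IS_COLD = True  # flipped to False after the first invocation


def handler(event: dict, context: Any) -> dict:
    global _IS_COLD
    cold, _IS_COLD = _IS_COLD, False

    started = time.perf_counter()
    # Hypothetical placeholder for the real NLP work on the phrase.
    tokens = event.get("phrase", "").split()

    return {
        "tokens": tokens,
        "cold_start": cold,
        "init_seconds": _INIT_SECONDS if cold else 0.0,
        "handler_seconds": time.perf_counter() - started,
    }
```

Logging these fields per request makes it easier to tell whether slow responses come from the setup phase or from the handler itself.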


If you’re anything like me, you think that serverless is great. Negligible running costs, no server configuration to take up your time, auto-scaling by default, etc.

Of course, by virtue of the ‘no free lunch’ mantra, this convenience and these cost savings have to come at a price. Whilst there are numerous real costs to serverless (memory constraints, package size, run time restrictions, developer learning curve), this article is going to assume that you’ve already solved those (or don’t care about them) and instead focus on a specific performance cost: cold starts.